| AI in cybersecurity is now both the most significant threat and the most important defence available to NZ businesses. Hackers use AI to launch attacks that are faster, more convincing, and harder to detect than anything possible before 2024. The same technology also powers the most effective defences. Understanding which side is winning — and how — is the starting point for protecting your business. |

The original version of this guide covered ten ways hackers were using AI to attack businesses. In the 18 months since it was published, the threat has advanced to a point where those ten methods feel like the baseline rather than the frontier.

Agentic AI attack systems — fully autonomous tools that plan, execute, and adapt entire attack campaigns without human operators — have moved from theoretical to operational. LLM-powered malware that generates new code at runtime to evade detection has gone from proof-of-concept to documented deployment. And AI-driven ransomware campaigns now automate functions that previously required entire teams of skilled attackers.

This updated guide covers where AI in cybersecurity has moved since 2024, what the current attack methods look like, and what NZ businesses can do to match the threat with equivalent defensive capability.

| IBM X-Force 2026 Threat Index: 49% increase in active ransomware groups in 2025. Infostealer malware exposed over 300,000 ChatGPT credentials. AI has collapsed the human response window and turned remote access into the fastest path to breach. Cybercrime frequency and severity approximately doubled through 2025. |

How AI Has Changed the Cybersecurity Threat Landscape

The most important shift in AI in cybersecurity is not a single new attack method. It is a structural change in how attacks are resourced, executed, and scaled.

Before AI, a sophisticated cyberattack required skilled operators for each stage: reconnaissance, social engineering, vulnerability exploitation, lateral movement, and exfiltration. Each stage was a bottleneck. Attacks were constrained by the number of skilled humans available to run them.

AI removes those bottlenecks. Reconnaissance that took a skilled analyst days can now be automated in hours. Phishing emails that required a native-speaker social engineer to craft are now generated at scale by large language models. Malware that a development team spent weeks building can now be generated dynamically at runtime. The skill ceiling has dropped and the attack volume ceiling has risen dramatically.

The result is a threat environment in which the speed, volume, and sophistication of attacks have outpaced the detection and response capabilities of most NZ businesses operating without AI-powered defences. Our guide on cyber resilience covers how to build an organisation that can absorb and respond to this new attack tempo.

The Current AI Attack Methods Targeting NZ Businesses

The following table covers the AI attack methods currently operational in 2026, how they work, and what they mean specifically for NZ businesses.

| AI Attack Method | How It Works | What It Means for NZ Businesses |
| --- | --- | --- |
| AI-powered phishing | LLMs generate personalised, grammatically perfect messages at scale, tailored to each recipient using scraped public data | 54% click-through rate vs 12% for traditional phishing. Every NZ business receiving email is being targeted |
| Voice cloning vishing | AI clones executive voices from 3 seconds of audio for fraudulent phone calls requesting payments or credentials | Finance teams are primary targets. NZ geography means many businesses have small, familiar teams easy to impersonate |
| Deepfake video attacks | Real-time video deepfakes impersonate executives in live video calls requesting financial authorisation | One NZ-relevant case: a finance employee authorised US$25M after a call where every other participant was a deepfake |
| Polymorphic AI malware | LLM-powered malware generates new code variants at runtime, producing unique signatures that evade signature-based detection | Traditional antivirus cannot catch what it has never seen. Endpoint detection and response (EDR) is now the baseline requirement |
| Agentic AI attacks | Fully autonomous AI systems plan, execute, and adapt entire attack campaigns with minimal human oversight | AI agents carrying out 80-90% of each operation represent a fundamentally new threat level. Speed of attack has no human bottleneck |
| Automated vulnerability scanning | AI scans and probes networks at machine speed, identifying exploitable weaknesses faster than patches can be applied | Unpatched systems are found and exploited within hours of a vulnerability being published. Patch cycles measured in weeks are no longer sufficient |
| AI supply chain attacks | Compromised AI agents and LLM integrations used as vectors to access connected systems, databases, and APIs | Businesses adopting AI tools without security assessment are creating new attack surfaces they do not know exist |
| Credential harvesting at scale | AI-powered infostealer malware systematically harvests credentials from browsers, apps, and AI platforms | Over 300,000 ChatGPT credentials were exposed by infostealer malware in 2025. AI platform credentials carry unique risk |

The Emergence of Agentic AI Attacks

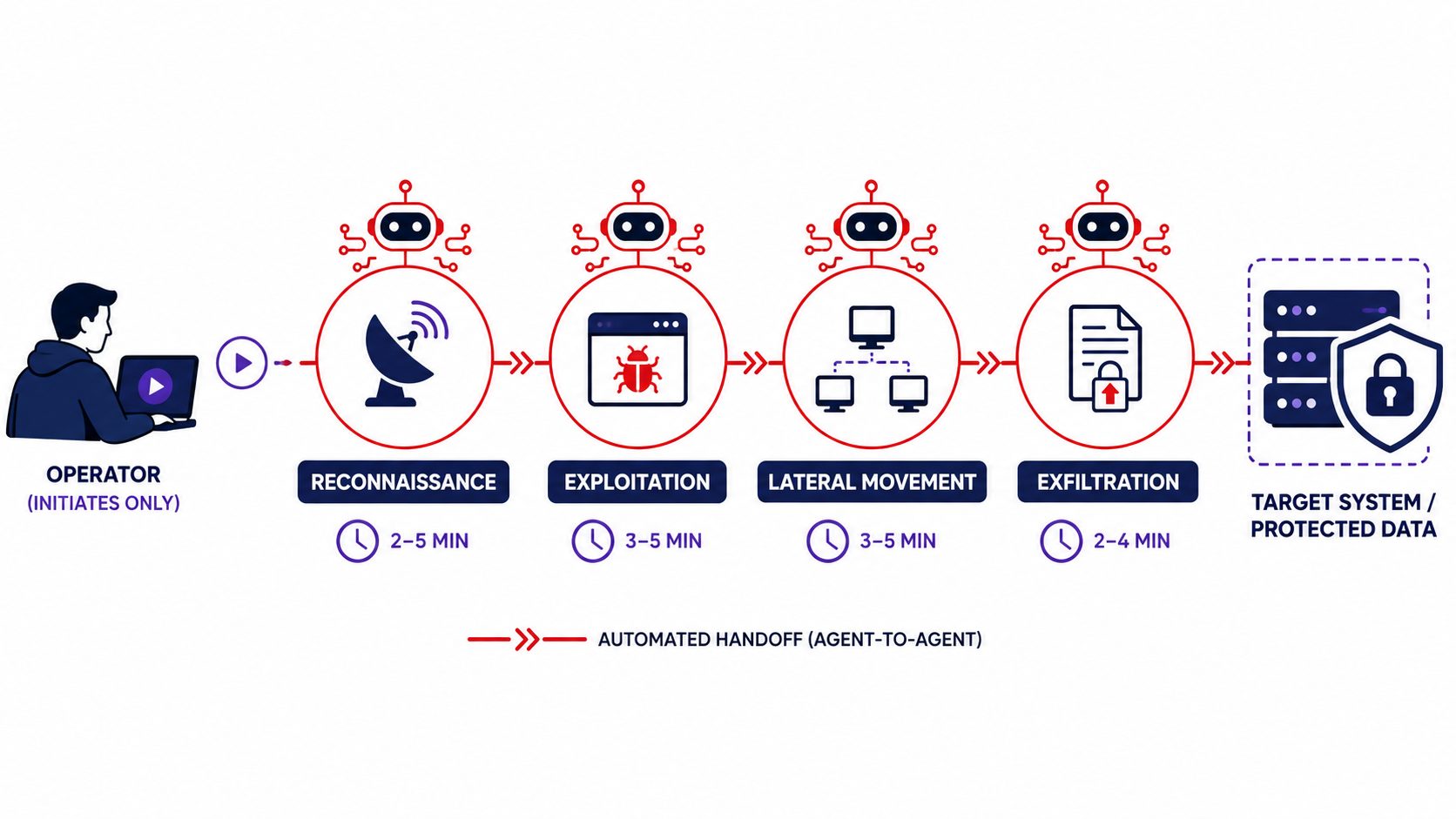

Agentic AI is the most significant development in AI in cybersecurity since large language models became accessible in 2023. It deserves specific attention because it represents a qualitative shift in the threat, not just a quantitative one.

What agentic AI attacks are

An agentic AI attack system is an autonomous programme that can plan, execute, and adapt an entire attack campaign (reconnaissance, social engineering, vulnerability exploitation, lateral movement, and data exfiltration) without requiring a human operator at each step. It adjusts its approach in real time based on what is working and what is not.

Security researchers have documented cases where AI agents carried out 80 to 90% of each operation with human operators intervening at only four to six key decision points per intrusion. The implication is direct: attack campaigns that previously required entire teams of skilled operators can now be run by a small team leveraging AI agents, at a fraction of the previous cost and at machine speed.

Why this changes the defensive requirement

When attacks are automated and adaptive, defences must be automated and adaptive in response. A security team that detects anomalies manually, escalates through human decision chains, and responds in hours is operating at a speed mismatch against an attacker whose system responds in seconds.

This is why AI-powered detection — tools that identify anomalous behaviour in real time and trigger automated responses before an attacker reaches critical systems — has moved from a large-enterprise capability to a baseline requirement for any NZ business that wants meaningful protection. Our managed IT services include 24/7 automated monitoring precisely because human-response-speed detection is no longer adequate against the current threat.

LLM-Powered Malware: The End of Signature-Based Detection

Signature-based detection — identifying malware by matching known patterns in its code — has been the foundation of antivirus and security tools for decades. LLM-powered polymorphic malware has broken that model.

MalTerminal, the earliest known GPT-4-powered malware, generates ransomware or reverse-shell code at runtime — producing a unique payload each time it runs. Every execution generates a signature that has never been seen before. Traditional antivirus databases cannot contain what does not yet exist.
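The limitation can be seen in a minimal sketch. It assumes a signature is simply a SHA-256 hash of the file contents, which is a simplification of how real antivirus signatures work, and it uses two harmless placeholder snippets rather than real malware. The names `signature`, `KNOWN_SIGNATURES`, and `matches_known_signature` are hypothetical, chosen for illustration.

```python
import hashlib


def signature(payload: bytes) -> str:
    """Return a SHA-256 digest, standing in for a malware signature."""
    return hashlib.sha256(payload).hexdigest()


# Two harmless snippets that behave identically but differ in one variable name.
variant_a = b"total = 1 + 1\nprint(total)\n"
variant_b = b"result = 1 + 1\nprint(result)\n"

# Hypothetical signature database that has only ever seen variant A.
KNOWN_SIGNATURES = {signature(variant_a)}


def matches_known_signature(payload: bytes) -> bool:
    """Signature matching: flag only payloads whose hash is already known."""
    return signature(payload) in KNOWN_SIGNATURES


print(matches_known_signature(variant_a))  # True
print(matches_known_signature(variant_b))  # False
```

Changing a single variable name is enough to produce a hash the database has never seen, which is the same gap that runtime-generated payloads exploit at scale.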

Security researchers from SentinelOne have documented multiple emerging malware families using this approach, including ESET’s PromptLock sample and campaigns known as LameHug and PromptSteal. These tools blur the line between code and conversation, allowing malicious logic to be generated dynamically based on the specific target environment.

The defensive response is behaviour-based detection rather than signature matching. Endpoint detection and response (EDR) tools monitor what a programme does rather than what it looks like. When ransomware begins encrypting files at unusual speed, or when a process starts accessing credential stores it has never touched before, EDR detects and isolates the threat regardless of whether its signature has been seen before. This is covered in more detail in our defence in depth guide.
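As a rough illustration of the behavioural approach, the sketch below flags any process that modifies more files within a short sliding window than a set threshold allows. It is not how any particular EDR product works; the class name `WriteRateMonitor`, the threshold of 50 writes, and the 10-second window are all assumptions chosen for the example.

```python
from collections import defaultdict, deque


class WriteRateMonitor:
    """Flags a process that modifies too many files within a short time window."""

    def __init__(self, max_writes: int = 50, window_seconds: float = 10.0):
        self.max_writes = max_writes
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)  # process id -> timestamps of recent writes

    def record_write(self, pid: int, timestamp: float) -> bool:
        """Record one file write; return True if the process should be isolated."""
        events = self._events[pid]
        events.append(timestamp)
        # Drop writes that have fallen out of the sliding window.
        while events and timestamp - events[0] > self.window_seconds:
            events.popleft()
        return len(events) > self.max_writes


monitor = WriteRateMonitor(max_writes=50, window_seconds=10.0)

# A normal process: one write every 2 seconds stays well under the threshold.
normal_flags = [monitor.record_write(pid=100, timestamp=t * 2.0) for t in range(60)]

# A ransomware-like burst: 200 writes in about 2 seconds.
burst_flags = [monitor.record_write(pid=200, timestamp=t * 0.01) for t in range(200)]

print(any(normal_flags))  # False
print(any(burst_flags))   # True
```

The check never inspects the code of the process, only what it does, so a payload with a never-before-seen signature is flagged the same way as a known one.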

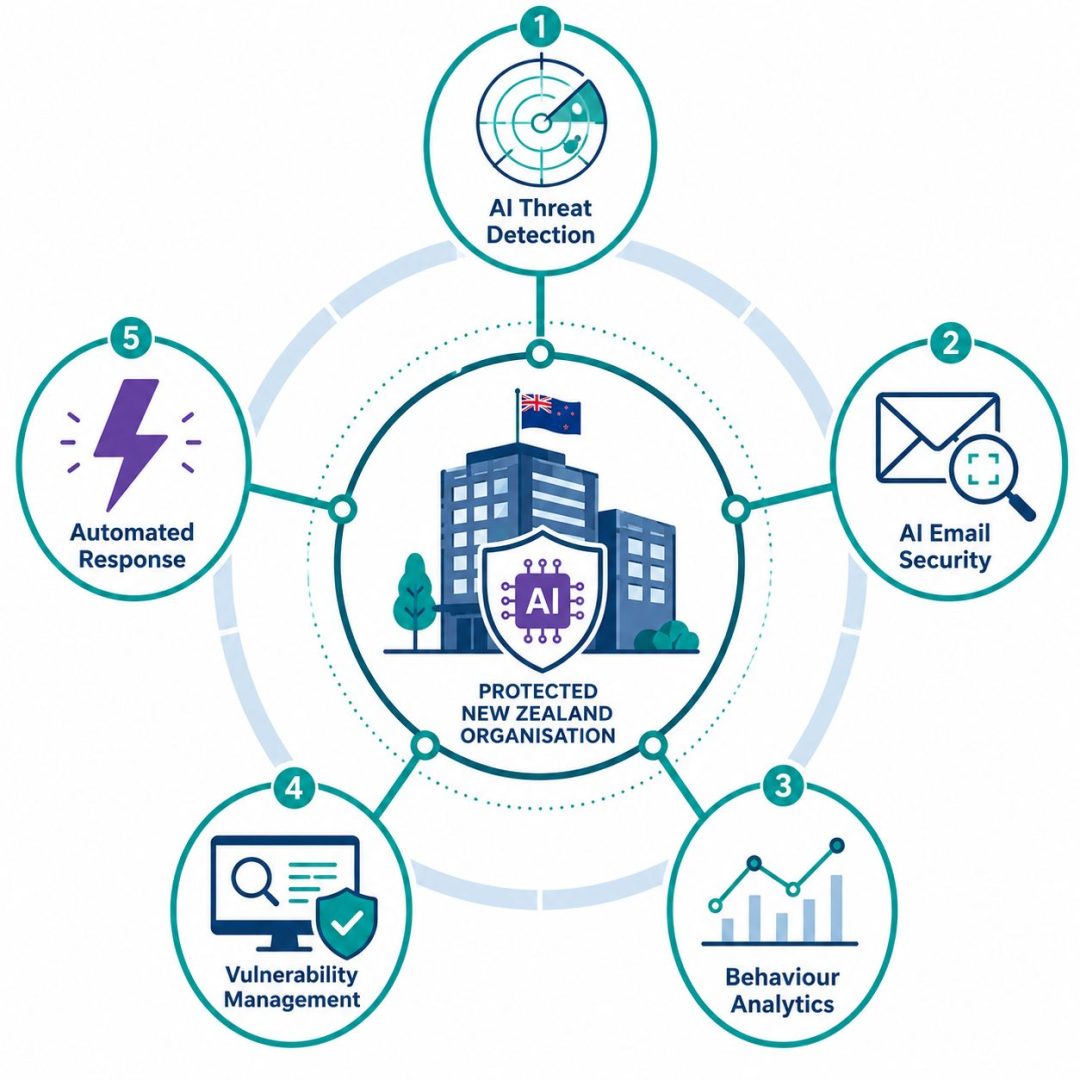

AI in Cybersecurity: How Businesses Can Use It Defensively

The same technology that makes attacks more dangerous also powers the most effective defences available. AI in cybersecurity is not only a threat — it is the most practical response to a threat environment that human-speed processes alone cannot match.

AI-powered threat detection and response

AI-driven security tools monitor network traffic, endpoint behaviour, user activity, and access patterns continuously, identifying anomalies that indicate an attack in progress. They can detect the behavioural signatures of an AI-powered attack — unusual enumeration activity, rapid file access patterns, credential queries from unexpected sources — faster than any human analyst reviewing logs.

Automated response capability means that when an anomaly is detected, affected systems can be isolated, suspicious processes terminated, and alerts escalated before a human analyst has even received the notification. Against an agentic AI attack operating at machine speed, automated response is the only proportionate countermeasure.

AI-enhanced email security

Standard email filters trained on historical phishing patterns cannot reliably detect AI-generated phishing that has never been seen before. AI-powered email security tools analyse message content, sender behaviour, structural patterns, and contextual signals in real time to identify AI-generated phishing with significantly higher accuracy than rule-based filters.

AI-assisted vulnerability management

Automated vulnerability scanning tools use AI to continuously assess your environment for exploitable weaknesses, prioritise them by risk, and recommend remediation in order of urgency. Against attackers using AI to scan for vulnerabilities at machine speed, manual or infrequent vulnerability assessment is not adequate.
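Prioritising by risk can be sketched as a simple scoring and sorting step. The weighting below is an assumption made for illustration, not a standard formula: it starts from a severity score on a 0 to 10 scale and raises it when the system is internet-facing or when exploitation has already been observed. The `Finding` class and the sample findings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    severity: float         # base severity score, 0.0 to 10.0
    internet_facing: bool
    exploit_observed: bool  # exploitation already seen in the wild


def risk_score(finding: Finding) -> float:
    """Illustrative weighting only: boost severity for exposure and active exploitation."""
    score = finding.severity
    if finding.internet_facing:
        score *= 1.5
    if finding.exploit_observed:
        score *= 2.0
    return score


findings = [
    Finding("internal file server", severity=9.1, internet_facing=False, exploit_observed=False),
    Finding("VPN gateway", severity=7.5, internet_facing=True, exploit_observed=True),
    Finding("staff printer", severity=5.3, internet_facing=False, exploit_observed=False),
]

# Highest risk first, so remediation starts where exposure is greatest.
remediation_order = sorted(findings, key=risk_score, reverse=True)
for finding in remediation_order:
    print(f"{finding.name}: {risk_score(finding):.1f}")
```

In this example the VPN gateway is remediated first despite its lower base severity, because it is exposed to the internet and already being exploited.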

Behavioural analytics for insider threat and credential compromise

AI-powered user and entity behaviour analytics (UEBA) establish a baseline of normal behaviour for each user and system, then flag deviations that indicate compromised credentials or insider activity. When a user account suddenly accesses systems it never touches, downloads data at unusual volume, or operates outside its normal hours, UEBA detects it within minutes. This connects directly to the credential risk highlighted in the IBM X-Force data — stolen AI platform credentials carry specific risks that traditional access monitoring was not designed to catch. Our dark web monitoring service provides early warning when your credentials have already been compromised.
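The baseline-and-deviation idea can be sketched with a simple statistical check. This example assumes the only signal is a user's daily download volume and that a z-score above 3 counts as anomalous; real UEBA tools combine many more signals, and the function name `is_anomalous` and the sample figures are hypothetical.

```python
from statistics import mean, stdev


def is_anomalous(history_mb: list[float], today_mb: float, threshold: float = 3.0) -> bool:
    """Flag today's volume if it is more than `threshold` standard deviations above baseline."""
    baseline = mean(history_mb)
    spread = stdev(history_mb)
    if spread == 0:
        # No variation in the history: anything above the baseline is unusual.
        return today_mb > baseline
    z_score = (today_mb - baseline) / spread
    return z_score > threshold


# Hypothetical download volume (MB) for one user over ten working days.
history = [120, 95, 110, 130, 105, 98, 115, 125, 102, 108]

print(is_anomalous(history, 118))   # False
print(is_anomalous(history, 4200))  # True
```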

What NZ Businesses Should Do Right Now

The AI threat landscape has advanced beyond what most NZ businesses have built their defences around. These are the priority actions based on where the actual attacks are landing in 2026.

Audit your AI tool exposure

Every AI tool your staff use — ChatGPT, Copilot, Gemini, or any other platform — represents a credential attack surface. Infostealer malware specifically targets AI platform credentials because compromised access to AI tools can be used for data exfiltration, prompt injection, and manipulation of AI-assisted workflows. Audit which AI tools your team uses, ensure MFA is enforced on all of them, and confirm your monitoring covers these platforms.
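A first-pass audit can be as simple as checking an inventory of AI tool accounts for gaps. The sketch below assumes you already hold such an inventory; the `AiToolAccount` fields, the `audit_gaps` function, and the sample accounts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AiToolAccount:
    tool: str
    user: str
    mfa_enforced: bool
    monitored: bool  # covered by your security monitoring


def audit_gaps(accounts: list[AiToolAccount]) -> list[str]:
    """Return a plain-language list of accounts missing MFA or monitoring coverage."""
    gaps = []
    for account in accounts:
        if not account.mfa_enforced:
            gaps.append(f"{account.user} on {account.tool}: MFA not enforced")
        if not account.monitored:
            gaps.append(f"{account.user} on {account.tool}: not covered by monitoring")
    return gaps


# Hypothetical inventory for illustration.
inventory = [
    AiToolAccount("ChatGPT", "alice", mfa_enforced=True, monitored=True),
    AiToolAccount("Copilot", "bob", mfa_enforced=False, monitored=True),
    AiToolAccount("Gemini", "carol", mfa_enforced=True, monitored=False),
]

for gap in audit_gaps(inventory):
    print(gap)
```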

Move from signature-based to behaviour-based endpoint protection

If your endpoint security relies primarily on antivirus with signature-based detection, it cannot reliably catch LLM-powered polymorphic malware. Endpoint detection and response (EDR) that monitors behaviour rather than matching signatures is now the minimum standard for meaningful endpoint protection.

Implement AI-powered email security

Rule-based email filters are not adequate against AI-generated phishing that achieves a 54% click-through rate. AI-powered email security that analyses message content and sender behaviour in real time is the proportionate response to the current phishing threat.

Accelerate your patch cycle

Automated AI vulnerability scanning means that newly published vulnerabilities are being identified and exploited faster than ever. Patch cycles measured in weeks are now a meaningful risk window. Monthly patching is the minimum for routine updates; critical vulnerabilities should be patched within days.
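One way to make this cadence concrete is to attach a deadline to every vulnerability based on its severity. The sketch below assumes a three-day window for critical vulnerabilities and 30 days for everything else; those figures, along with the names `PATCH_SLA_DAYS`, `patch_due_date`, and `is_overdue`, are illustrative assumptions rather than a standard.

```python
from datetime import date, timedelta

# Hypothetical patch deadlines in days, keyed by severity.
PATCH_SLA_DAYS = {"critical": 3, "high": 30, "medium": 30, "low": 30}


def patch_due_date(published: date, severity: str) -> date:
    """Return the date by which a vulnerability of this severity should be patched."""
    return published + timedelta(days=PATCH_SLA_DAYS[severity])


def is_overdue(published: date, severity: str, today: date) -> bool:
    """True once today is past the patch deadline."""
    return today > patch_due_date(published, severity)


published = date(2026, 3, 2)

print(patch_due_date(published, "critical"))                       # 2026-03-05
print(is_overdue(published, "critical", today=date(2026, 3, 10)))  # True
print(is_overdue(published, "medium", today=date(2026, 3, 10)))    # False
```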

Test your detection capability against AI attack patterns

Your security tools may detect traditional attacks reliably but miss the behavioural signatures of agentic AI attack systems. Testing your detection capability against realistic AI attack scenarios — through red team exercises or security assessments — tells you where your actual gaps are before an attacker finds them. Our cybersecurity risk assessment service can identify where your current defences have gaps against the 2026 threat landscape.

Is Your Cyber Security Keeping Pace With AI?

Exodesk delivers AI-aware cyber security to South Island businesses from our offices in Christchurch and Dunedin. Our managed security services include 24/7 automated monitoring, AI-powered email security, endpoint detection and response, and regular vulnerability management — updated continuously to reflect the current AI threat landscape rather than the one that existed when most NZ businesses last reviewed their defences.

If your current security stack was built before agentic AI attacks and LLM-powered malware became operational, it is not providing the protection you need. We offer an honest, no-obligation review of your current posture against the 2026 threat landscape.

Contact us today to discuss how we can help your business, or connect with us on LinkedIn for more insights.

Frequently Asked Questions About AI in Cybersecurity

What is AI in cybersecurity?

AI in cybersecurity refers to the use of artificial intelligence by both attackers and defenders. Attackers use AI to automate and scale phishing campaigns, generate polymorphic malware that evades detection, clone voices for fraudulent calls, and run autonomous attack campaigns. Defenders use AI for real-time threat detection, behavioural anomaly identification, automated incident response, and vulnerability management. In 2026, AI in cybersecurity is both the most significant threat and the most important defensive tool available to NZ businesses.

How do hackers use AI in cybersecurity attacks?

Hackers use AI to generate personalised phishing emails at scale, clone executive voices from seconds of audio, create deepfake video impersonations for financial fraud, build polymorphic malware that rewrites itself to evade detection, automate vulnerability scanning at machine speed, and run agentic attack campaigns that execute entire intrusion lifecycles with minimal human oversight. Each of these methods has moved from theoretical to operationally deployed against real targets in 2025 and 2026.

What is agentic AI and why is it a cybersecurity threat?

Agentic AI refers to autonomous AI systems that can plan, execute, and adapt complex tasks without human direction at each step. In cybersecurity, agentic AI attack systems can conduct entire intrusion campaigns — from initial reconnaissance through to data exfiltration — with AI agents carrying out 80 to 90% of each operation and human operators intervening only at key decision points. This removes the human bottleneck from attack operations, enabling attacks at a speed and scale that human-led operations cannot match.

What is polymorphic malware and how does AI make it more dangerous?

Polymorphic malware changes its code to avoid detection. AI-powered polymorphic malware takes this further by generating entirely new code variants at runtime using large language models, producing unique signatures on every execution. Traditional signature-based antivirus tools cannot detect payloads that have never existed before. The defence is behaviour-based endpoint detection and response, which monitors what a programme does rather than what it looks like.

How does AI phishing differ from traditional phishing?

Traditional phishing used generic templates with obvious red flags: poor grammar, suspicious links, and impersonal greetings. AI-powered phishing generates personalised, grammatically perfect messages tailored to each recipient using scraped public data about their role, colleagues, and recent activity. The messages are contextually accurate and can be very difficult to distinguish from legitimate internal communication. This is why AI phishing achieves a 54% click-through rate compared to 12% for traditional attacks.

What are AI supply chain attacks?

AI supply chain attacks use compromised AI agents, LLM integrations, or AI platform access as vectors to reach connected systems, databases, and APIs. As businesses adopt AI tools and autonomous agents across their operations, each integration point represents a potential attack surface. Businesses that adopt AI tools without security assessment are creating new attack vectors they may not be aware of. Infostealer malware specifically targeting AI platform credentials — including over 300,000 ChatGPT credentials exposed in 2025 — makes AI tool access management a security priority.

How can AI be used defensively in cybersecurity?

AI-powered defences include automated threat detection that monitors behaviour rather than matching signatures, AI-enhanced email security that identifies AI-generated phishing in real time, user and entity behaviour analytics that flag anomalous access patterns indicating compromised credentials, automated vulnerability scanning that identifies exploitable weaknesses continuously, and automated incident response that isolates threats faster than human decision chains. Against AI-powered attacks operating at machine speed, AI-powered defences are the proportionate response.

Why can’t traditional antivirus stop AI-powered malware?

Traditional antivirus identifies malware by matching code against a database of known malicious signatures. LLM-powered polymorphic malware generates new code at runtime, producing signatures that have never existed before. There is nothing in the database to match against. The defence requires behaviour-based tools that detect what the malware does — accessing credential stores, encrypting files rapidly, establishing unusual network connections — rather than what it looks like.

How quickly are vulnerabilities being exploited by AI-assisted attackers in 2026?

AI-automated vulnerability scanning can identify exploitable weaknesses within hours of a vulnerability being published. The window between a vulnerability becoming publicly known and active exploitation has compressed from weeks to days or hours in many cases. This is why monthly patch cycles are now a meaningful risk window for critical vulnerabilities. Organisations running quarterly patching are operating with unacceptable exposure against the current AI-assisted scanning threat.

Are NZ small businesses targeted by AI cybersecurity attacks?

Yes — and the targeting is increasing. AI-powered attacks are more cost-effective to run at scale, which means attackers can now profitably target smaller businesses that were previously considered too low-value to pursue manually. The 49% increase in active ransomware groups in 2025 was driven significantly by AI lowering the barrier to entry, bringing in smaller operators who target SMEs specifically because they tend to have weaker defences than enterprise targets.

What is the most important thing NZ businesses can do about AI cybersecurity threats?

The single most important action is moving from signature-based to behaviour-based endpoint protection. Signature-based tools cannot catch AI-generated polymorphic malware. After that, the priority actions are implementing AI-powered email security, accelerating your patch cycle for critical vulnerabilities, auditing your AI tool credential exposure, and ensuring you have 24/7 automated monitoring capable of detecting the behavioural signatures of agentic AI attack patterns.

How does Exodesk help NZ businesses defend against AI cybersecurity threats?

Exodesk delivers AI-aware managed security services to South Island businesses from our offices in Christchurch and Dunedin. This includes 24/7 automated monitoring with behaviour-based anomaly detection, AI-powered email security, endpoint detection and response, patch management, dark web credential monitoring, and regular security assessments against the current AI threat landscape. Our fixed-price managed IT model means your defences are continuously maintained and updated rather than reviewed annually against threats that change monthly.